Google is continuously crawling, indexing and de-indexing web pages in order to provide people with relevant search results for their queries.

Google itself has said in its Search Console Help Forum,

“Google may temporarily or permanently remove sites from its index and search results if it believes it is obligated to do so by law, if the sites do not meet Google’s quality guidelines, or for other reasons, such as if the sites detract from users’ ability to locate relevant information.”

That being said, you should check your website weekly or monthly for index coverage issues and fix any you find right away. Failing to do so may result in permanent de-indexing of web pages.

In today’s article, I’m going to discuss index coverage issues detected in Google Search Console and how to fix them.

After reading this brief, step-by-step guide, you should be able to fix index coverage issues, even if you are new to Google Search Console.

Index Coverage Report:

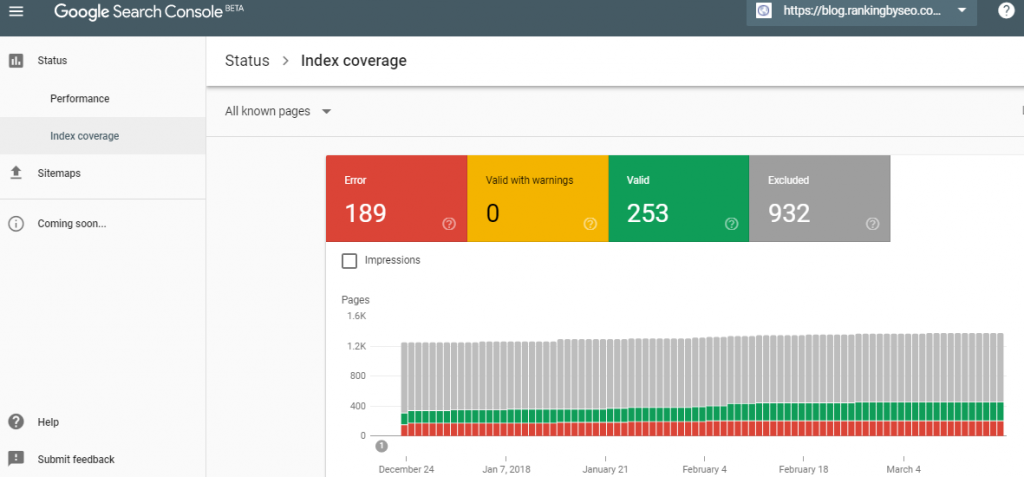

First, open the index coverage report in your Google Search Console.

After opening the index coverage report, the next step is to understand it.

How will you do that?

Here are the three things that you should look for:

- A spike in indexing errors

- A drop in total indexed pages without corresponding errors

- A significant number of your pages that are not indexed
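If you note down the report totals from time to time, the first two checks can be roughly automated. The sketch below is only an illustration: the `Snapshot` structure, the `flag_coverage_changes` function, and both thresholds are my own assumptions, not something Search Console provides, and the numbers you feed in have to come from your own report.

```python
from typing import List, NamedTuple


class Snapshot(NamedTuple):
    """Totals copied by hand from the report (hypothetical structure)."""
    label: str
    errors: int
    indexed: int


def flag_coverage_changes(snapshots: List[Snapshot],
                          spike_ratio: float = 1.5,
                          drop_ratio: float = 0.9) -> List[str]:
    """Compare each snapshot with the previous one and describe suspicious changes.

    spike_ratio and drop_ratio are arbitrary example thresholds.
    """
    findings = []
    for prev, curr in zip(snapshots, snapshots[1:]):
        # An error count well above the previous one counts as a spike.
        errors_spiked = curr.errors > max(prev.errors, 1) * spike_ratio
        # A clear fall in indexed pages counts as a drop.
        indexed_dropped = curr.indexed < prev.indexed * drop_ratio
        if errors_spiked:
            findings.append(
                f"{curr.label}: error spike ({prev.errors} -> {curr.errors})")
        if indexed_dropped and not errors_spiked:
            findings.append(
                f"{curr.label}: indexed pages dropped without matching errors "
                f"({prev.indexed} -> {curr.indexed})")
    return findings
```

A drop that is not matched by new errors is reported separately, because in that case the cause has to be looked for outside the Error section.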

Understanding Index Coverage Report

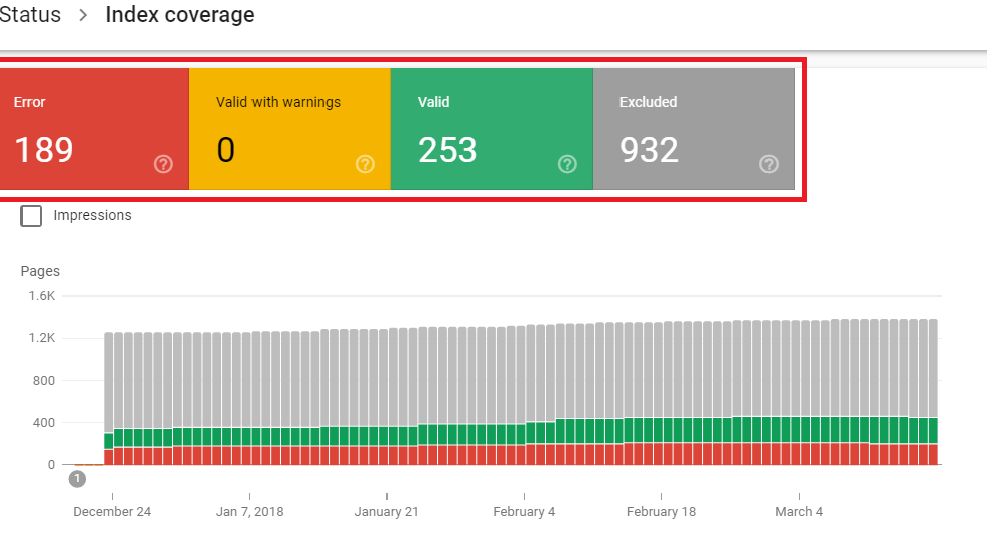

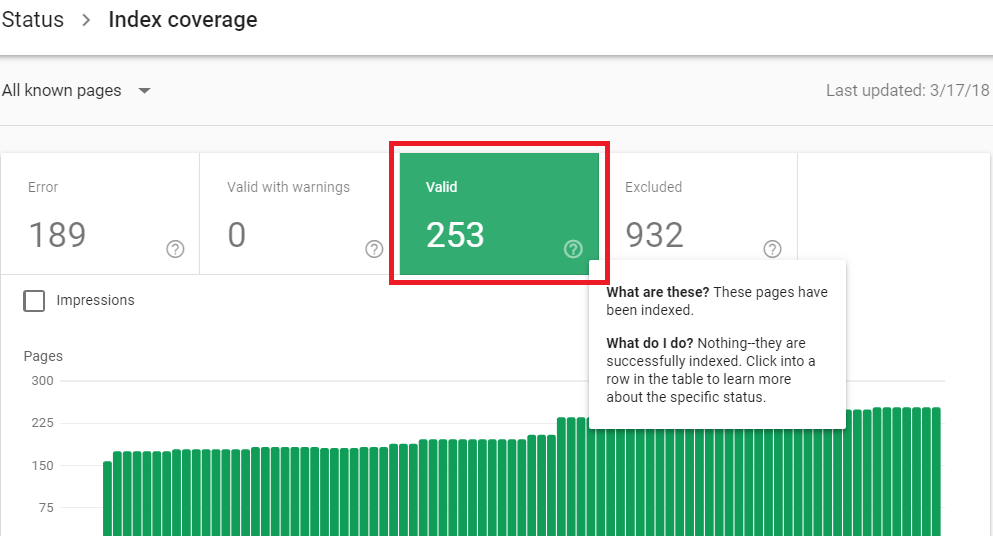

As you can see in the report, there are four sections – Error, Valid With Warnings, Valid, Excluded.

Let’s talk about what these four sections represent.

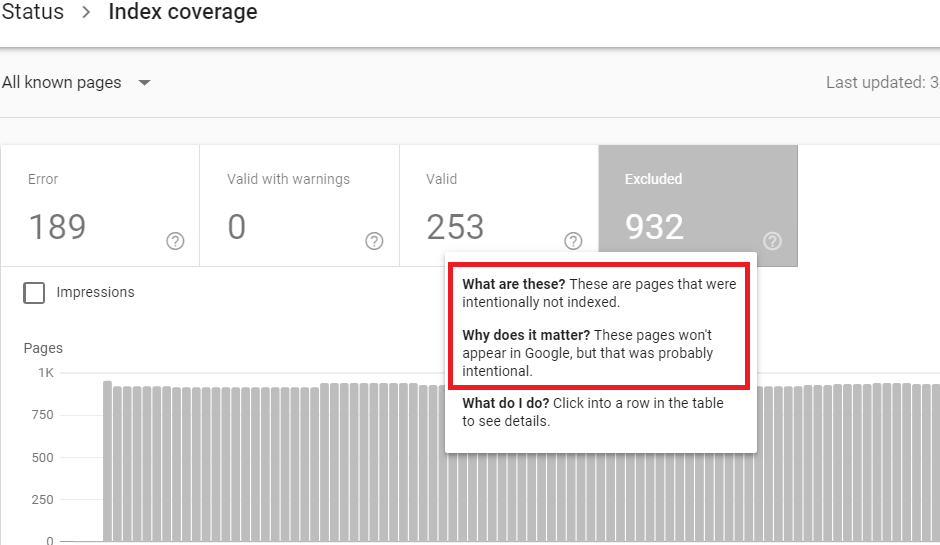

1- Excluded

In the Excluded section, you will see non-indexed pages that you typically cannot affect, because Google thinks you did not intend to have those pages indexed.

Why does it happen?

In some cases, pages that are at an intermediate stage of the indexing process are categorized in this section. But in most cases, it is the pages you have deliberately excluded (for example, with a noindex directive) that end up here.

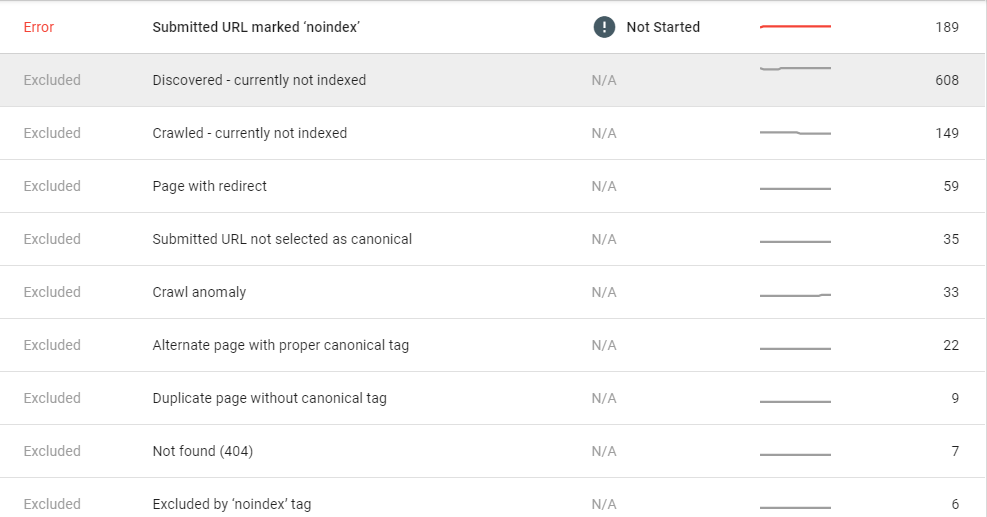

If you scroll down the index coverage report, you will find that Google also gives the reasons.

Here are some common reasons why Google thinks you do not want these pages to be indexed:

Blocked by noindex tag

If you want these pages to be indexed, just remove the noindex directive.

Blocked by page removal tools

Blocked by robots.txt

Blocked due to unauthorized request (401)

If you want Google to index these pages, you should either allow Googlebot to access your page or remove authorization.

Crawl Anomaly

Try fetching the page using Fetch as Google to see whether it returns any fetch issues.

Crawled – currently not indexed

The pages may or may not be indexed in the future. You don’t need to resubmit them.

Discovered – currently not indexed

Alternate page with proper canonical tag

Duplicate page without canonical tag

Duplicate non-HTML page

Google chose different canonical than user

Not found (404)

Page removed because of a legal complaint

Page with redirect

Queued for crawling

Soft 404

Submitted URL dropped

Submitted URL not selected as canonical

2- Valid

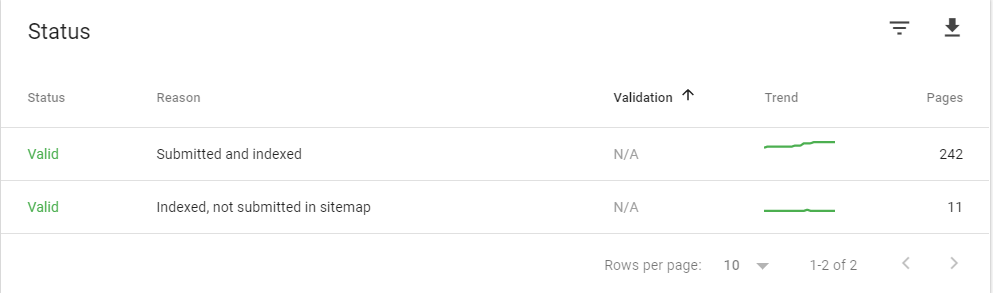

Pages categorized in the Valid section are the indexed ones. You will often see the following descriptions for indexed pages.

Submitted and indexed

These are the pages you submitted to Google for indexing and Google has honored your request.

Indexed, not submitted in sitemap

Google discovered these URLs on its own and indexed them. Though these pages are indexed, Google recommends that you submit all important URLs using a sitemap.

Indexed; consider marking as canonical

If you see this description, you should mark the URL as canonical, because Google has found duplicates of it.
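For pages that show up as "Indexed, not submitted in sitemap", the usual fix is to list them in a sitemap. Here is a minimal sketch of generating one, assuming you already have your important URLs in a list. The `build_sitemap` function name and the example URLs are hypothetical; the namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET
from typing import Iterable

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: Iterable[str]) -> str:
    """Return a bare-bones XML sitemap with one <url><loc> entry per URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page_url in urls:
        url_el = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" when serializing.
        ET.SubElement(url_el, "loc").text = page_url
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    # Placeholder URLs; replace them with your own pages.
    print(build_sitemap(["https://example.com/", "https://example.com/blog/"]))
```

Save the output as a sitemap file on your site and submit it in Search Console. Most blogging platforms and SEO plugins can generate a sitemap for you, so a script like this is only needed when yours cannot.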

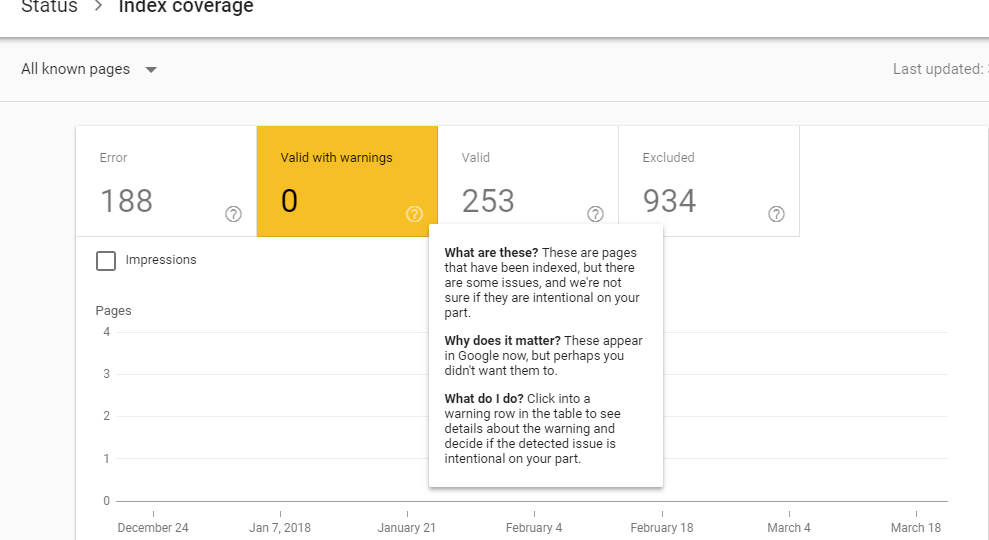

3- Valid With Warnings

Pages with warning status need your immediate attention. Though these pages have been indexed, they have some issues and Google doesn’t know whether these issues are intentional on your part.

You need to click on the warning row to find out whether these issues are intentional on your part.

Luckily, the Ranking By SEO blog doesn’t have any indexed page with a warning.

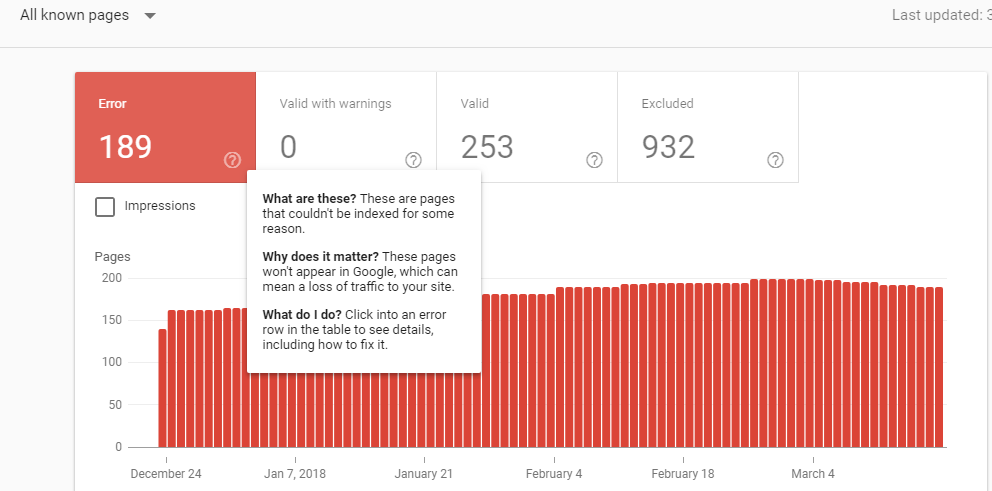

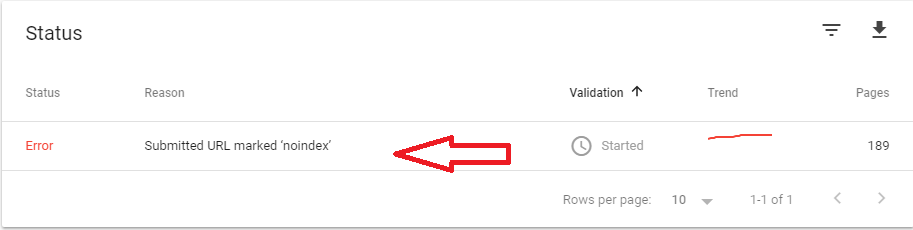

4- Error

In this section, you will see the pages that are not indexed for some reason. By clicking an error row, you will find details about the error and how to fix it.

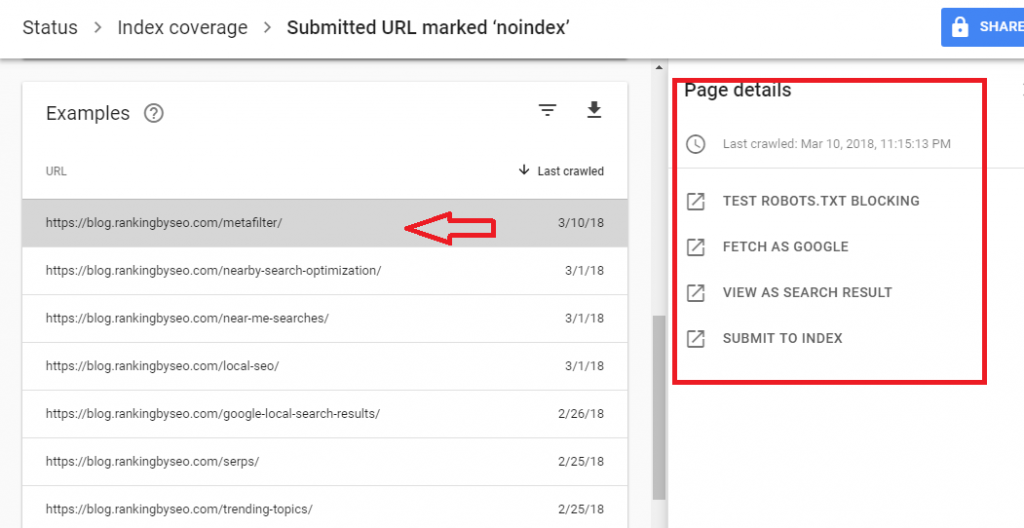

If you click on Submitted URL marked ‘noindex’, you will see the following window.

You will get the list of URLs with Page Details.

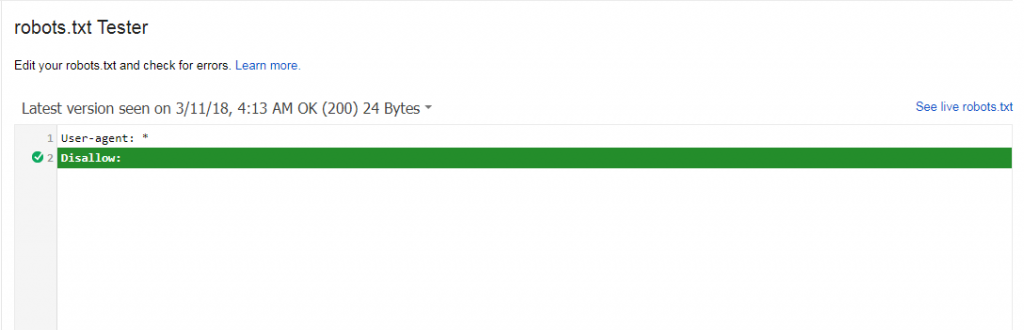

When I click on TEST ROBOTS.TXT BLOCKING, I get the following result.

I have checked all the errors and found that I have blocked these URLs as they are category pages or tags on the blog.

You should check every error row to find the cause.

Here are some common issues (that you will see in error rows) and their fixes:

Server error (5xx)

This error is caused by your server. Fix it, and your URL will be indexed.

Redirect error

According to Google, “It could be one of the following types: it was a redirect chain that was too long; it was a redirect loop; the redirect URL eventually exceeded the max URL length; there was a bad or empty URL in the redirect chain.”

Address the redirection issue if you want this URL to be indexed by Google.
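To see which of the four redirect problems in Google's description you are dealing with, you can walk the chain yourself. The sketch below works on a plain dictionary of "URL to redirect target" pairs that you collect however you like. The function name and the two limits (`max_hops`, `max_url_length`) are my own assumptions, since the quote does not give Google's exact thresholds.

```python
from typing import Dict, Optional


def classify_redirect_chain(start_url: str,
                            redirects: Dict[str, Optional[str]],
                            max_hops: int = 10,
                            max_url_length: int = 2048) -> str:
    """Walk a redirect map and report the first problem found.

    `redirects` maps a URL to its redirect target. A URL that is absent
    from the map is treated as a final page that does not redirect.
    max_hops and max_url_length are assumed limits, not Google's.
    """
    seen = {start_url}
    current = start_url
    hops = 0
    while current in redirects:
        target = redirects[current]
        if not target:
            return "bad or empty URL in chain"
        if len(target) > max_url_length:
            return "redirect URL too long"
        if target in seen:
            return "redirect loop"
        hops += 1
        if hops > max_hops:
            return "redirect chain too long"
        seen.add(target)
        current = target
    return "ok"
```

For example, `classify_redirect_chain("/a", {"/a": "/b", "/b": "/a"})` reports a redirect loop, which you would fix by pointing one of the two URLs at a real page.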

Submitted URL blocked by robots.txt

This happens when you submit a URL that is blocked by robots.txt to Google. To fix this issue, update your robots.txt file so that it allows Googlebot to crawl the URL.
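Before resubmitting, you can check which of your submitted URLs are still blocked. This is a rough sketch using Python's standard `urllib.robotparser`; the `blocked_urls` helper and the sample rules are hypothetical. Keep in mind that Python's parser does not necessarily interpret every rule exactly as Googlebot does (wildcards, for instance), so treat the result as a first check only.

```python
from typing import Iterable, List
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str,
                 urls: Iterable[str],
                 user_agent: str = "Googlebot") -> List[str]:
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


if __name__ == "__main__":
    # Sample rules that block tag and category pages (made up for this example).
    rules = "User-agent: *\nDisallow: /tag/\nDisallow: /category/\n"
    print(blocked_urls(rules, ["https://example.com/tag/seo/",
                               "https://example.com/my-post/"]))
```

If a URL you want indexed appears in the result, remove or narrow the matching Disallow rule. If it is a page you blocked on purpose, take it out of your sitemap instead.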

Submitted URL marked ‘noindex’

If you want pages with this error to be indexed, you should remove the noindex directive from the robots meta tag or from the HTTP response header.
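A noindex directive can sit in two places: a robots meta tag in the page's HTML, or an `X-Robots-Tag` HTTP response header. Here is a minimal sketch that checks both, given the HTML and headers you have already fetched. The `has_noindex` function is my own helper, and it only handles the simple cases: it ignores the `none` directive and headers that are scoped to a specific crawler.

```python
from html.parser import HTMLParser
from typing import List, Mapping


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html: str, headers: Mapping[str, str]) -> bool:
    """Return True if the meta tags or the X-Robots-Tag header contain noindex."""
    parser = _RobotsMetaParser()
    parser.feed(html)
    header_value = ""
    for name, value in headers.items():
        # Header names are case-insensitive.
        if name.lower() == "x-robots-tag":
            header_value = value
    header_directives = [d.strip().lower() for d in header_value.split(",")]
    return "noindex" in parser.directives or "noindex" in header_directives
```

If this returns True for a page you want indexed, look in your theme, SEO plugin, or server configuration for whatever adds the directive.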

Submitted URL seems to be a Soft 404

According to Google, “A soft 404 means that a URL on your site returns a page telling the user that the page does not exist and also a 200-level (success) code to the browser.”
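Going by that definition, you can do a rough check for soft 404s yourself: look for a success status code combined with "not found" wording in the page. The sketch below is only a heuristic. The phrase list and the `looks_like_soft_404` function are assumptions of mine, and Google's own detection is certainly more involved.

```python
import re

# Example phrases only; adjust them to the wording your site actually uses.
NOT_FOUND_PHRASES = ("page not found", "does not exist", "no longer available")


def looks_like_soft_404(status_code: int, body_text: str) -> bool:
    """Heuristic: a 2xx response whose text reads like an error page."""
    if not 200 <= status_code < 300:
        # A real 404 or 410 is not a soft 404.
        return False
    text = re.sub(r"\s+", " ", body_text).lower()
    return any(phrase in text for phrase in NOT_FOUND_PHRASES)
```

The fix is to make the server return a real 404 (or 410) status for pages that no longer exist, or to redirect them to a relevant live page.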

Submitted URL returns unauthorized request (401)

This happens when Google receives an unauthorized (401) response. To fix this issue, either allow Googlebot to access your pages or remove the authorization requirement.

Submitted URL not found (404)

This occurs when you submit a non-existent URL to Google.

Submitted URL has crawl issue

You have submitted a URL to Google, but Google ran into a crawling error. Solution? Try debugging your page using Fetch as Google.

Most often, Google itself tells you what you should do to address the issues associated with pages that are not indexed and fall in the Error section.
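Several of the errors above come down to the HTTP status code your server returns, so once you have checked your submitted URLs you can sort them quickly. The mapping below is a rough sketch based only on the error names used in this article. The function names are hypothetical, and the status codes have to come from your own checks.

```python
from typing import Dict, List


def coverage_error_for_status(status_code: int) -> str:
    """Map an HTTP status code to the matching error name from the report."""
    if 500 <= status_code <= 599:
        return "Server error (5xx)"
    if status_code == 401:
        return "Submitted URL returns unauthorized request (401)"
    if status_code == 404:
        return "Submitted URL not found (404)"
    return "no status-based error"


def group_by_error(statuses: Dict[str, int]) -> Dict[str, List[str]]:
    """Group URLs by the error their status code would most likely trigger."""
    groups: Dict[str, List[str]] = {}
    for url, status in statuses.items():
        groups.setdefault(coverage_error_for_status(status), []).append(url)
    return groups
```

Grouping the URLs this way lets you fix one kind of problem at a time, which is also how Search Console validates fixes.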

What You Should Do After You Fix Issues

Once you have fixed all the instances of a specific issue on your website, you can request Google to validate your changes.

Google Search Console tracks the validation state of each instance of an issue as well as the state of the issue as a whole. If all the instances of an issue are fixed, the issue is marked as fixed in the status table and dropped to the bottom of the table.

Conclusion:

What about you? Did you find index coverage issues in Google Search Console? How did you fix them? Do share your thoughts or questions in the comment section. I’d love to hear about them.

Which topic do you want Ranking By SEO to cover in its future posts? Let us know.

Its looking difficult. I am facing this problem today and i am so worried about it.

I hope i can resolve this problem after applying this method.

Thanks for this article.

It is getting difficult to resolve this error, but I am trying to do it another time after reading this post, I hope this time I will hit bull eye.

How to fix this error in blogger platform?

Hi Sooraj,

I would try to help you out with that. Could you please share GWT details with us so that we can help you with this further?

How to fix those errors??

If you are good at SEO and some of coding part, and also follow these tips properly, you should be able to do it. However, if you can’t do it yourself, you better hire someone good at all this.

how to fix this problem , my site have many many excluded page

Please follow the guide step by step. If you can’t still fix it yourself, please hire an expert to do it for you.

This is a very helpful article. Thanks for sharing this. I fix the error found at my website by following these steps.

Once again thank you very much.

Hi Chandan,

Very glad to know that you were able to fix issues using our guide.

Thank you Lalit Sir. It also happens to me and I am waiting for the result that what will happen next. but Also Thnks for Your Support.

Thanks!

Hmm it appears like your blog ate my first comment (it was super long) so I guess I’ll just sum it up what I wrote and say, I’m thoroughly enjoying your blog. I too am an aspiring blog writer but I’m still new to everything. Do you have any tips and hints for rookie blog writers? I’d certainly appreciate it.

Sir, some of my posts have been crawled but have not been indexed. Is there any solution? And

what is this “Crawl but not currently index”?

Thanks for sharing about index coverage issue. I’ve also received the message from Google webmaster for index coverage. Your tips will really help me to fix this issue.

Thanks. Glad to know that it helped.

Thank you for nice words.

When I first got the index coverage issues message, I was lost, have zero idea of what it meant……I finally got it all fixed thanks to you

Hello Lalit Sharma,

Thanks for share this article about index coverage issue I’m Already face this now days one more issue found webmaster image no index and remove all please give me suggestion ….

Thanks for sharing tips on coverage issues. I think it is not big problem. I’ve also received the message from Google webmaster for index coverage. Your tips will really help me to fix this issue.

Thanks for sharing useful information, I had 45 errors in my search console So I am thinking about how to fix this problem But now I have got an idea. So I will try to fix the problem if I am getting any problem I will come to your blog.

Thank you so much